Craig Huitema and Soni Jiandani blogged about Cisco’s latest ASIC innovations for the Nexus 9K platforms and IDC did a write up and video. In this blog, I’ll expand on one component of the innovations, intelligent buffering. First let’s look at how switching ASICs maybe designed today. Most switching ASICs are built with on-chip buffer memory and/or off-chip buffer memory. The on-chip buffer size tends to differ from one ASIC type to another, and obviously, the buffer size tends to be limited by the die size and cost. Thus some designs leverage off-chip buffer to complement on-chip buffer but this may not be the most efficient way of designing and architecting an ASIC/switch.

This will lead us to another critical point: how can the switch ASIC handle TCP congestion control as well as the buffering impact to long-lived TCP and incast/microburst packets (a sudden spike in the amount of data going into the buffer due to lots of sources sending data to a particular output simultaneously. Some examples of that IP based storage as the object maybe spread across multiple nodes or search queries where a single request may go out on hundreds or thousands of nodes. In both scenarios the TCP congestion control doesn’t apply because it happens so quickly).

In this video, Tom Edsall summarizes this phenomenon and the challenges behind it.

Now, what have we done in our latest switch ASIC innovations to tackle these challenges? First of all, the latest ASIC innovations are based on 16nm fabrication technology – Industry’s first for switch ASICs compared to 28nm offerings from merchant vendors. This allowed us to bring more capabilities and scale while keeping cost under control and lowering power consumption.

The other innovations are around addressing network congestion challenges. How to support different types of traffic that will traverse the fabric like distributed IP-based storage, microburst, big data, etc. without performance impact?

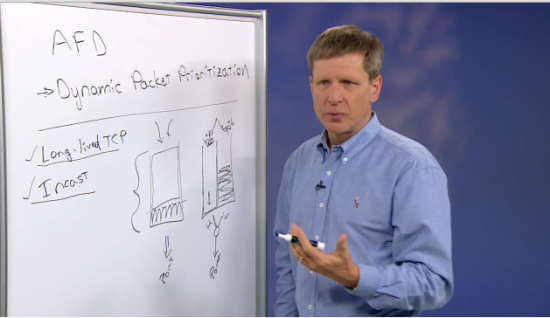

Tom Edsall illustrates here two things to alleviate this challenge and the importance of intelligent buffering:

- Dynamic Packet Prioritization (DPP) – prioritizes small flows over large flows in transmit scheduling so that mice flows will be guaranteed for transmission without suffering packet losses due to buffer exhaustion or additional latency due to excessive queueing.

- Approximate Fair Drop (AFD) – introduces flow size awareness and fairness to the early drop congestion avoidance mechanism. Unlike WRED which treats all traffic flows equally within a given class, AFD is capable of differentiating large flows vs small flows (using elephant trap) in a class, and submit large (elephant) flows to the early-drop buffer threshold while leaving enough buffer headroom for small (mice) flows.

In addition, this Miercom test report (Cisco Systems Speeding Applications in Data Center Networks) puts the traditional simple buffer implementation and our new algorithm-based intelligent buffer architecture into the test with real-world traffic workloads, and proves that our intelligent buffer management based on DPP and AFD provides a better solution than just simply increasing the buffer size.

Another test report by Miercom around Big Data (Cisco Network Switch Impact on “Big Data” Hadoop-Cluster Data Processing) that compares the hadoop-cluster performance with switches of differing characteristics.

What’s in it for customers? For one they have a two year advantage to build a differentiated infrastructure that allows them to advance their business goals and outcome! They’ll have best in class infrastructure that can support various application and traffic types. App developers and DevOps teams can deliver the app performance at cloud scale providing ultimate user experience – an infrastructure for the long run.

Rami Rammaha – this is a great solution for the mid-1990s. You should not confuse people with such titles. These switches are not being used in any cloud scale deployments. And private DCs are fast disappearing and have no need for expensive hardware or scale. Cloud washing is also a decade old. Just talk about what the switch is and networks without bringing in developers, applications and DevOps. They are confusing and just marketing buzzwords in this context.

Me and Heh forgot to mention that we work for Arista. Big Buffers Rule!

@ Solutions for 1990s – no marketing just real engineering work for real problems faced by customers!

Yes these are real problems caused by Cisco’s lack of innovation and lack of understanding of cloud. Your cloud team is pathetic. Stick to hardware and don’t cloudwash.

Cisco 9K uses Broadcom Chips having similar limitations as all other vendors. Clever algorithms can only solve extremely small congestion issues. Adding too much buffer increases latency too. Having different port speeds on a switch will always lead to tail drops.. no real way around that.. some manipulation is possible but not too much. What Cisco is trying is to confuse the lay buyers further. The people in this btz can see through the lame logics.

@ Heh – Please see the IDC report describing the specifics and capabilities of Cisco’s ASICs http://www.cisco.com/c/dam/en/us/products/collateral/switches/nexus-9000-series-switches/white-paper-c11-734328.pdf

We’re not talking about Broadcom chip sets here!

Miercom is Cisco’s stooge for decades now why should anyone believe them..??

Uh…

You may need to invest in purchasing one of our latest Nexus 9K switches and perform the testing yourself. Once you have the results I hope you can share!

Have you heard about software? AWS?

So do the “new” 9Ks have custom ASIC?

Becuase I’m pretty sure there are 9Ks with Broadcom chipsets?

Can someone explain the reasons for having both? Which one should I buy?

Buy the ones with 3-5 times the route scale, better 100G density, and lower power consumption than competitor merchant based ToR solutions. Will be easy to find.

@Buffer the stuff – you got it!

Please don’t use titles like “Moving to Cloud? Intelligent Buffer Matters!” when this article has NOTHING to do with cloud. Cisco, you make great switches, ACI and the 9K is a good first step, (where is SDN for my Catalyst and ISR fleet?) but please don’t get sucked in to labeling everything with a “Cloud” moniker and think it suddenly makes it relevant to current web-scale technologies.

The whole point of Cloud is to be freed from underlying technical infrastructure, and give time back to your valuable engineering teams so they can work on adding business value. If my engineers spent even 10 minutes worrying about buffers in our cloud environment, they are failing at their job.

Second, I think you guys should talk to you CEO because apparantly Cloud is not what customers want:

http://www.crn.com.au/News/416333,cisco-ceo-8216cloud-is-not-what-customers-want8217.aspx

What a genius statement that one was.

OMG did Chuck Robbins actually say that out loud? Surely that’s a misquote? I know Cisco are struggling to remain relevant in the Cloud space (how’s intercloud going?) but really, burying your head in the sand won’t help Chuck.

@CraigE – you may want to go beyond the headline and read the full article!

This was exactly the point as this blog amply demonstrates. Cisco’s knowledge of cloud is laughable.

@Solution for 1990s – Did you read the article?

Yes, Rami, I did read the article. Let me quote if for those that have not:

“Don’t get distracted by some technology that’s got a lot of buzz and excitement about it. Because that’s when companies get into trouble.”

Whilst people who are yet to adopt the Cloud may indeed think it has “buzz and excitement”, those of us that have in fact made the migration understand it represents a game changing approach to the consumption of IT, and the only feasible approach to make a meaningful reduction in the amount of time engineering teams spend managing infrastructure.

I manage an IT department responsible for hundreds of Catalyst switches, and tens of ASR and ISR G2 routers. I have a networking team, comprised of 1 design group, 1 implementation group, and 1 operations group. Whilst the operations staff have responsibilities other than networking, it still takes a team of 11 people to maintain the network.

I also have around 900 servers running on a large Cloud provider, want to know how many network engineers I have managing that environment Rami? Well I’ll tell you, zero, it is all managed using the “infrastructure as code” methodology.

So let me ask you Rami, have you actually managed a meaningful workload running in the Cloud to genuinely appreciate the benefits it brings to an organization?

As I stated, I believe ACI and the 9K is a good first step, but you are focusing on the exact wrong area. The DC race has been run and won, companies will migrate to the cloud, the on-premise DC is dead, some people just have trouble accepting that.

Now the Enterprise network on the other hand, that is an environment that is crying out for a genuine SDN solution. Why do I still have engineers using a CLI to manage infrastructure in 2016? I can program a $12 Raspberry Pi using a SDK and an API, but my million dollar Cisco enterprise network doesn’t provide even the most basic of programability, which is a huge missed opportunity.

But I digress, this blog post is about buffers, so my original statement stands. The title “Moving to Cloud? Intelligent Buffer Matters!” is misleading, incorrect, and I’m sure Chuck Robbins would say you are using “buzz and excitement” keywords to promote the post, doesn’t that go 100% against what he said in the CRN article? You can’t have it both ways Rami.

@ Brent Watney – First of all thanks for being a Cisco customer.

We’re all in agreement that we want the benefits of cloud as stated by Chuck in the CRN article “The benefits of what they’re gaining from the cloud is what they want. No-one ever wanted SDN [software-defined networking] – they wanted automation simplicity, operational efficiency and lower cost of running their infrastructure. You have to stay focused on those things.”

Now, for the Enterprise infrastructure please take a look here http://www.cisco.com/c/en/us/solutions/enterprise-networks/cisco-digital-network-architecture.html?CAMPAIGN=dna&COUNTRY_SITE=us&POSITION=vanity&REFERRING_SITE=cisco%2Ecom&CREATIVE=vanity and hopefully will help in achieving your goals.

For data centers whether in the public or private cloud, the benefits are well understood for most customers. For customers who want the flexibility to use a mixed model with hybrid cloud or just deploy a private cloud, then you need a solution that can help them to move in that direction without worrying about the types of apps deployed, infrastructure resources, traffic pattern, etc. A solution that allows them to model apps once and deploy anywhere in the private or public DC with consistent policy. A solution that is point-n-click. A solution that enables customers with choices and accelerates business innovation. Please see the full announcement here http://newsroom.cisco.com/press-release-content?type=webcontent&articleId=1750136.

Brent, I’ll be happy to schedule a meeting to learn more about how we can help your organization to automate and improve operational efficiency. Thanks again for the dialog.

@Brent Watney – Thanks for forwarding the CRN link as it obviously got your attention. Did you have a chance to read the article and hear what was said?

And you’re right you don’t want your engineers to worry or spend anytime about the buffers or the infrastructure regardless of your environment because we got it under control!

@ Rami Rammaha

I will be attending Cisco Live in July, and hopefully there will be some more clarity around APIC-EM at that time. As I stated previously, I genuinely think ACI is a good first step, but at the same time I really am disappointed that the DC team were first to come up with a programmable infrastructure. The enterprise routing and switching team have been asleep at the wheel, and should never have been caught this far behind.

I hope with JC gone Cisco can move away from the Command and Control management style that has hampered innovation for so long. With agile methodologies providing so much value to both start-ups and visionary “legacy” companies alike, I’m hoping Cisco sees the value and ramps up the innovation velocity.

Anyways, keep up the good work, and hopefully you will shame the enterprise teams into finally releasing something!

I saw a mention of APIC-EM I see a start of the ‘put ciscoworks as part of the name of every product’ as it is I am constantliy having to explain ACI and APIC-EM have very little in common at this time. Just say no.