There is a popular quote when it comes to containers and networking:

“There’s no such thing as container networking!” (Kelsey Hightower).

There is just networking. However, everything connected to the network has changed a lot.

Nearly a decade ago, the Twelve Factor App methodology introduced guiding principles to develop and deploy modern applications. As technology evolved, these fundamental guidelines changed the way IT teams managed infrastructure, platforms and development environments. They are the source of new paradigms, such as DevOps and GitOps, and of the generalization of REST APIs. The introduction and rapid adoption of container orchestrators such as Kubernetes have further accelerated their practical application. Developers can now take advantage of faster delivery, which has a direct impact on the business.

Cisco embraced these changes very early by actively participating in projects such as OpenDaylight and contributing to other major open source projects. Capturing technology transitions has always been part of Cisco’s DNA. As a combination of these innovations, we released Cisco ACI; that was 6 years ago.

But in this new cloud-native era, network and security are still major concerns when transitioning to a modern datacenter architecture, especially when adding Linux containers to the picture. This is why the ACI engineering team is constantly innovating.

This article describes the current challenges that we see customers facing and how ACI Anywhere can help your organization address them.

Cloud Native and Current Challenges

If we zero in on the technical challenges, the traditional IT world, where infrastructure, platform and middleware have clearly delimited functions, has now morphed into an environment with blurred lines between them. More than infrastructure becoming defined as code, it is the way distributed software systems abstract infrastructure components and influence their requirements that has significantly altered the nature of the datacenter. What used to rely on built-in hardware resiliency and scale-up design for critical applications is now underpinned by on-demand, scale-out and stateless distributed datacenter orchestrators, such as Kubernetes, Mesos or Nomad.

On the infrastructure side, traditional applications could already take advantage of more flexible components in their deployment processes, such as SDN and model-driven networks. At the crossroads between these technologies, companies now realize that adapting their environment to new application requirements is key to successfully running modern datacenters and maintaining a consistent approach to operations. Operational teams are expected to use the right tools in the right place.

Cisco ACI was developed from the ground up with that purpose in mind: to provide a flexible SDN layer supporting all types of applications and form factors. This includes bare-metal servers, hypervisors, VMs, and cloud-native workloads. ACI has provided a Container Network Interface (CNI) plugin for Kubernetes platforms since release 3.0. It serves as an extension of the network fabric into the container network, enabling traditional network teams, SREs and developers to interact with a single network and security API.

Just to list a few benefits, the ACI CNI currently delivers the following capabilities for network-oriented teams:

- Single overlay data-plane for containers, virtual machines and bare-metal servers.

- ACI whitelist security model extended to container workloads. Containers are deployed securely by default.

- End-to-end network visibility and telemetry, including container network traffic.

- Native, distributed L4 Load-Balancer at the ToR layer.

For application and platform-oriented teams:

- Support for Kubernetes network principles such as namespace isolation and network policies

- IPAM

- Policy-based distributed Source NAT per application (or namespace, cluster…)

- Secure Service-Mesh deployment

- Automatic load-balancing configuration

- Additional Prometheus metrics
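To make the "namespace isolation and network policies" item concrete, here is a minimal sketch of a standard Kubernetes NetworkPolicy that only admits traffic from pods in the same namespace. It uses only the upstream `networking.k8s.io/v1` API and nothing ACI-specific; the policy name `allow-same-namespace` and the namespace `demo` are illustrative assumptions, and how the ACI CNI enforces such a policy in the fabric is not shown here.

```python
import json


def namespace_isolation_policy(namespace: str) -> dict:
    """Build a NetworkPolicy that only admits ingress from pods in the same namespace."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        # The policy name is an illustrative assumption.
        "metadata": {"name": "allow-same-namespace", "namespace": namespace},
        "spec": {
            # An empty podSelector selects every pod in the policy's namespace.
            "podSelector": {},
            "policyTypes": ["Ingress"],
            # A "from" entry with only a podSelector matches pods in the
            # policy's own namespace, so other namespaces are excluded.
            "ingress": [{"from": [{"podSelector": {}}]}],
        },
    }


if __name__ == "__main__":
    # "demo" is a hypothetical namespace name.
    print(json.dumps(namespace_isolation_policy("demo"), indent=2))
```

Kubernetes accepts manifests in JSON as well as YAML, so the printed document can be applied with the usual cluster tooling.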

Different Teams need Different Tools

As public cloud becomes a resource of choice for deploying microservices-based applications (Gartner, Survey Analysis: Container Adoption and Deployment, 2018), one of the main challenges that companies face is how to adapt operations across cloud environments and private datacenters. The two typically live in opposite worlds. Traditional datacenters tend to favor stateful and monolithic applications, where infrastructure paradigms are tied to slow change processes and flexibility is often constrained by stringent SLA requirements. Conversely, operations in the public cloud rely heavily on native provider services, which are consumed via APIs or automated with other DevOps tools, and whose availability is not the customer's responsibility.

More importantly, this difference is equally significant when it comes to network and security management. Cloud-native automation tools are not only essential to manage workloads and cloud services, but also key to operating network services and security at scale. These tools provide the ability to programmatically manage constructs such as security groups, subnets and CIDR blocks, and to interact with the network layer of container orchestrators. This gives cloud and platform teams great flexibility and agility and enables them to build first-class applications.

The private datacenter has also experienced big changes in terms of automation adoption and tools, but the underlying infrastructure doesn't systematically expose "cloud-native" or easily consumable APIs. This makes it difficult for the network team to be as efficient when they need, for example, to correlate physical network information with the abstracted container layer.

Another operational challenge is the management of the container logical boundary, which is usually that of the cluster. This often raises additional concerns on the network side. Stakeholders typically need to consider the following:

- How to enable secure communication for containers across multiple clusters and hosting environments?

- How to uniquely identify application flows that leave the cluster?

- Is there any common language or tool to manage connectivity and security policies between public clouds and private data centers?

ACI is the common networking layer for Cloud Native and Traditional Applications

ACI has the capabilities required to answer these questions. In ACI 4.1, we introduced support for public cloud, providing a common policy model for network connectivity and security. Cisco Multi-Site Orchestrator further added the ability to manage it all from a single interface, automating network stitching, security, VPN and tunnel configurations. Alongside the ACI CNI, these capabilities allow you to seamlessly control application flows between on-premises containers, public cloud workloads and all other form factors we currently support within the private datacenter.

However, this did not address the whole picture, since public-cloud-based container solutions were not part of it. With the release of ACI 5.0, we're extending our CNI capabilities to make containers that are deployed in the cloud first-class citizens in the network.

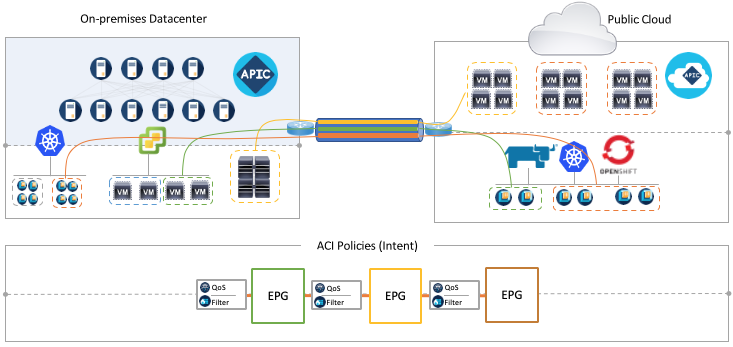

Let’s sum this up with a picture:

Leveraging a single abstracted policy model, ACI now allows you to mix and match any type of workload (including containers) and attach it to an overlay network that is always hardware-optimized on premises while relying on software components in the cloud. The container cloud-networking solution is composed of the ACI CNI and the CSR 1000v virtual router. This hybrid architecture is underpinned by a single EVPN VXLAN control plane that enables a zero-trust security model by securely exchanging endpoint information between remote locations. Whether Kubernetes clusters run in the cloud or within the datacenter no longer matters: they can live in different ACI-managed domains without any compromise on agility and security.

On premises, the container integration is coupled with APIC, providing visibility and correlation with the physical network. In the cloud, the ACI 5.0 integration relies on Cloud APIC, which programs cloud and container networking constructs using native policies. This gives network admins access to all relevant container information and enables end-to-end visibility from cloud to cloud or cloud to on-prem. It further delivers multi-layer security: at the application layer with support for Kubernetes Network Policies and container EPGs, at the physical network layer using ACI hardware, and in the cloud by automating native security constructs.

From the application platform team’s standpoint, this new integration lets them use their favorite cloud-native tools to interact with the Kubernetes API, while the ACI CNI takes care of the lower-level implementation details at the various network and security layers. This includes automatic load-balancing for exposed services, NAT, EPGs, contracts and enforcement of Kubernetes network policies. As these teams further adopt the Kubernetes Operator model, the ACI CNI will bring more automation capabilities. This will allow Kubernetes Operator constructs to securely interact with ACI and apply configuration changes based on the lifecycle of Kubernetes resources and a declarative model.

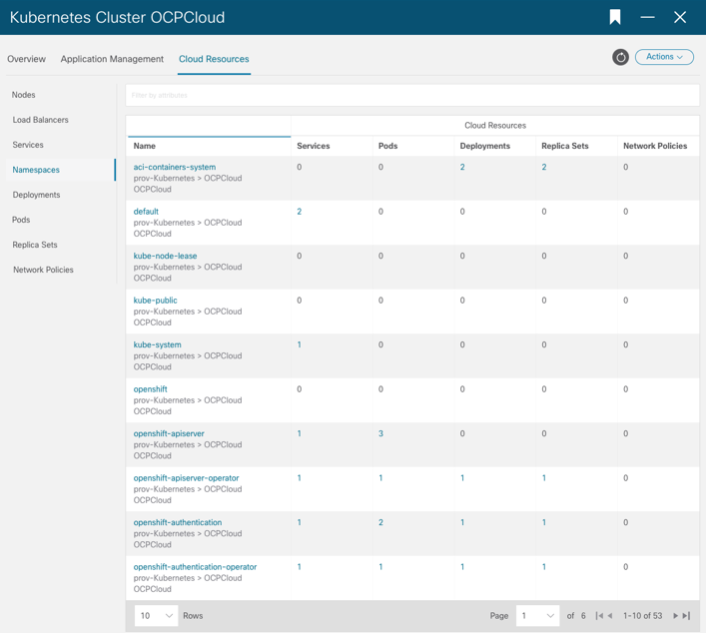

The following screenshot illustrates OpenShift container visibility in Cloud APIC:

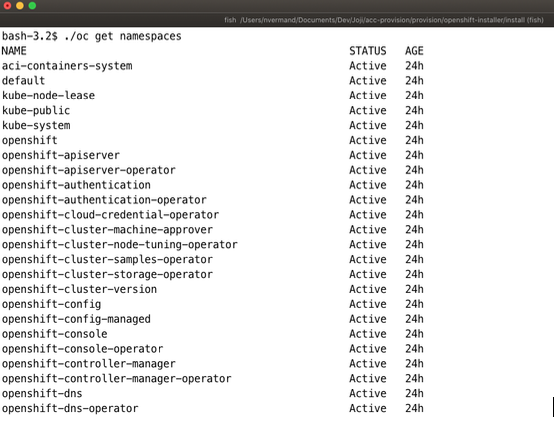

Similarly, the platform team traditionally displays namespace information using the OpenShift command line:

There is obviously a plethora of Kubernetes distributions available, and many cloud providers, so the number of possible combinations grows quickly. With ACI 5.0, we are excited to introduce support for Red Hat OpenShift Container Platform (OCP) 4.3 IPI on AWS EC2. Over time, we’ll support more combinations, in alignment with our customer priorities.

Application Policy Infrastructure Controller (APIC) Release Notes

- Support for Red Hat OpenShift 4.3 on OSP 13

- Running mixed form factor nodes (VM and bare-metal servers) within the same cluster

- More flexible mapping between Kubernetes clusters and ACI constructs

- Secure Istio service mesh deployment

- Better observability with the integration of Prometheus and Kiali

More Technical Details

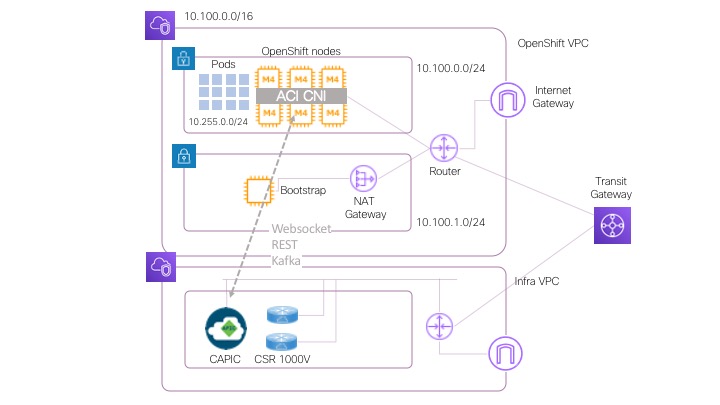

As mentioned previously, the initial version of the ACI cloud CNI (available as part of ACI 5.0) supports the deployment of OpenShift 4.3 IPI in AWS. The IPI mode (Installer Provisioned Infrastructure) provides full-stack automation for OpenShift deployments. In this mode, the OpenShift installer controls all areas of the installation, including infrastructure provisioning, with an opinionated best-practices deployment of OpenShift Container Platform.

The ACI CNI configuration is part of the OpenShift installer, enabling the integration of Cloud APIC and the OpenShift cluster at deployment time. This allows the cloud and network teams to get full visibility on the OpenShift constructs, with no extra work for the platform teams.

The cloud CNI configuration is a 3-step process, using the same tool and configuration method as for the traditional integration with APIC (on-prem).

- The Network or Cloud team executes acc-provision to generate the sample YAML configuration input for the CNI. There’s a new “cloud” flavor available. The CNI configuration file must be modified to fit your environment.

- The Network or Cloud team executes acc-provision with the -a flag to deploy the constructs required on Cloud APIC. This includes the ACI tenant used for the OpenShift cluster as well as the VRF, context profiles, EPGs, etc. As part of this process, acc-provision also generates the additional manifests and YAML configuration required by the OpenShift installer to deploy the CNI components (Namespace, Deployments, DaemonSets, etc).

- The Platform team enables the ACI CNI option in the OpenShift installer configuration file and deploys the cluster.
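The first two steps above can be sketched as a small helper that assembles the acc-provision command lines without running them. The `-a` flag and the "cloud" flavor come from the text above; the `--sample`, `-f`, `-c`, `-o` and `-u` options are written from memory of the acc-provision CLI and should be treated as assumptions to check against `acc-provision --help` for your release. The file names and user name are hypothetical, and password handling is deliberately left out.

```python
import shlex
from typing import List


def sample_command(flavor: str) -> List[str]:
    """Step 1: ask acc-provision for a sample configuration for a flavor."""
    # --sample and -f are assumed option names, not confirmed by the article.
    return ["acc-provision", "--sample", "-f", flavor]


def apply_command(config: str, output: str, flavor: str, user: str) -> List[str]:
    """Step 2: provision the controller and generate the installer manifests."""
    # -a is the flag named in the article; -c, -o and -u are assumptions.
    return [
        "acc-provision",
        "-c", config,   # edited CNI configuration file
        "-o", output,   # generated manifests for the OpenShift installer
        "-f", flavor,
        "-a",
        "-u", user,
    ]


if __name__ == "__main__":
    # Hypothetical file and user names, printed for review rather than executed.
    print(shlex.join(sample_command("cloud")))
    print(shlex.join(apply_command("aci-cni.yaml", "aci-manifests.yaml", "cloud", "admin")))
```

Printing the commands instead of executing them keeps the sketch safe to run anywhere; the third step happens in the OpenShift installer configuration and is not covered here.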

Once the OpenShift installer has finished its job, the cloud or network team can create EPGs and contracts on Cloud APIC (or MSO) to define how cloud-based containers can communicate with the infrastructure back on-premises, with other VPCs or cloud provider environments. As usual, annotations must be used by the platform team to map the OpenShift constructs to the ACI EPGs.
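As an illustration of the annotation step, the sketch below adds an EPG annotation to a Namespace manifest. The annotation key `opflex.cisco.com/endpoint-group` and its JSON fields (`tenant`, `app-profile`, `name`) are quoted from memory of the ACI CNI documentation and are an assumption here, so verify them against the integration guide for your release. The tenant, application profile and EPG names are hypothetical.

```python
import copy
import json

# Assumed annotation key; confirm it in the ACI CNI documentation.
EPG_ANNOTATION = "opflex.cisco.com/endpoint-group"


def epg_annotation(tenant: str, app_profile: str, epg: str) -> dict:
    """Return the annotation mapping a Kubernetes object to an ACI EPG."""
    value = json.dumps(
        {"tenant": tenant, "app-profile": app_profile, "name": epg},
        separators=(",", ":"),
    )
    return {EPG_ANNOTATION: value}


def annotate(manifest: dict, tenant: str, app_profile: str, epg: str) -> dict:
    """Return a copy of the manifest with the EPG annotation added."""
    annotated = copy.deepcopy(manifest)  # leave the caller's manifest untouched
    metadata = annotated.setdefault("metadata", {})
    metadata.setdefault("annotations", {}).update(
        epg_annotation(tenant, app_profile, epg)
    )
    return annotated


if __name__ == "__main__":
    namespace = {"apiVersion": "v1", "kind": "Namespace", "metadata": {"name": "demo"}}
    # "ocp-tenant", "kubernetes" and "web" are hypothetical ACI object names.
    print(json.dumps(annotate(namespace, "ocp-tenant", "kubernetes", "web"), indent=2))
```

The same helper works for any manifest that carries a `metadata` section, such as a Deployment, because it only touches the annotations map.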

The ACI Cloud CNI is deployed according to the following network architecture:

Summary

A modern and robust network is one that supports both traditional and cloud-native applications. More importantly, it must provide a consistent approach to operations and the ability to manage hybrid policies. This is what ACI delivers with ACI 5.0, making containers in the cloud first-class citizens. This new integration allows the network team to extend their control from ACI running in the datacenter to the container network running in the public cloud, taking advantage of the ACI Anywhere policies. It also enables the platform team to benefit from a powerful CNI that is seamlessly integrated with Red Hat OpenShift IPI.

We are just getting started. You can expect many more features in this area in future ACI releases!

Additional Resources

- Cisco ACI and Kubernetes Integration Configuration TechNote

- Cisco ACI and OpenShift Integration Configuration TechNote

- Cisco ACI CNI Plugin for Red Hat OpenShift Container Platform Architecture and Design Guide

Cisco Live resources:

- Deploying Kubernetes in the Enterprise with Cisco ACI – BRKACI-2505

- OpenShift and Cisco ACI Integration – BRKACI-3330

DEVNET resources: