At Cisco Live! in London this week, Cisco is demonstrating enhancements to its Nexus 1000V virtual switch that ease some of the challenges of deploying VXLAN in large-scale cloud networks. VXLAN was designed to solve the problem of setting up traditional virtual networks (VLANs) in large multi-tenant cloud environments: the 12-bit VLAN ID allows only about 4,000 segments, which shared infrastructures quickly exhaust, whereas the 24-bit VXLAN segment ID allows roughly 16 million. VXLAN thus becomes the foundation for virtual network tunnels, or virtual network overlays, on top of physical networks. And unlike VLANs, VXLANs are designed to act as L2 virtual networks over L3 physical networks. For a more in-depth refresher on VXLAN, start here.

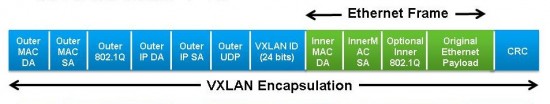

While VXLANs have certainly enabled a whole new level of scalability for virtual networks, one of the challenges in deploying VXLAN is its use of IP Multicast to implement the L2 over L3 network capability. Why is this? VXLAN is a MAC-in-IP encapsulation protocol carried in a UDP datagram. The virtual switch that acts as the VXLAN termination point (in Cisco’s case, the Nexus 1000V virtual switch) takes the L2 frame from the VM, wraps it in VXLAN, UDP, and L3 IP headers, and sends it out over the IP network. The challenge is that the sending switch has no built-in way to determine the IP address of the destination host (the VXLAN termination point) behind which the desired MAC address can be found. In other protocols, this is handled by the network control plane and some MAC-to-IP mapping protocol, but the VXLAN specification indicates there should be no reliance on a control plane or a physical-to-virtual mapping table.
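To make the encapsulation step concrete, here is a minimal Python sketch of the 8-byte VXLAN header that sits between the outer UDP header and the VM's original L2 frame. The function names are hypothetical and this is not Nexus 1000V code; the outer IP and UDP headers, which a real termination point would also add, are left out.

```python
import struct
from typing import Tuple

VXLAN_FLAG_VNI_VALID = 0x08  # "I" flag: the segment ID field is valid


def vxlan_header(vni: int) -> bytes:
    """Build the 8-byte VXLAN header for a 24-bit segment ID (VNI)."""
    if not 0 <= vni < 2 ** 24:
        raise ValueError("VNI must fit in 24 bits")
    # Word 0: flags byte followed by 24 reserved bits.
    # Word 1: 24-bit VNI followed by 8 reserved bits.
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)


def encapsulate(inner_frame: bytes, vni: int) -> bytes:
    """Prefix the VM's L2 frame with a VXLAN header (this becomes the UDP payload)."""
    return vxlan_header(vni) + inner_frame


def decapsulate(udp_payload: bytes) -> Tuple[int, bytes]:
    """Split a UDP payload back into (VNI, original L2 frame)."""
    word0, word1 = struct.unpack("!II", udp_payload[:8])
    if not (word0 >> 24) & VXLAN_FLAG_VNI_VALID:
        raise ValueError("VNI-valid flag is not set")
    return word1 >> 8, udp_payload[8:]
```

Note that nothing in the header says where to send the packet: the outer destination IP has to come from somewhere else, which is exactly the problem described above.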

VXLAN thus resorts to IP Multicast (i.e., flooding and dynamic MAC learning) to determine which IP address a packet should be sent to, given only the destination MAC address. This leads to a lot of extra set-up, excess network traffic, and some dependence on the physical network (for example, the core has to be IP Multicast enabled). It hasn’t been ideal.
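The flood-and-learn behavior can be sketched in a few lines of Python. This is an illustrative model only: the class and method names are hypothetical, and a real termination point would keep a separate table per VXLAN segment and age entries out.

```python
BROADCAST_MAC = "ff:ff:ff:ff:ff:ff"


class FloodAndLearnEndpoint:
    """Toy model of a VXLAN termination point that relies on IP Multicast."""

    def __init__(self, multicast_group: str):
        self.multicast_group = multicast_group  # group assigned to this segment
        self.mac_to_remote_ip = {}              # learned: inner MAC -> outer IP

    def outer_destination(self, dst_mac: str) -> str:
        """Pick the outer destination IP for a frame addressed to dst_mac."""
        if dst_mac == BROADCAST_MAC:
            return self.multicast_group
        # Unknown unicast MACs are flooded to the multicast group.
        return self.mac_to_remote_ip.get(dst_mac, self.multicast_group)

    def learn(self, inner_src_mac: str, outer_src_ip: str) -> None:
        """On receipt, remember which remote endpoint the sender's MAC sits behind."""
        self.mac_to_remote_ip[inner_src_mac] = outer_src_ip
```

Until a reply has been seen from a given MAC address, every frame addressed to it goes to the whole group, which is where the extra traffic and the multicast-enabled core requirement come from.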

Now Cisco is introducing enhancements to its VXLAN implementation that overcome this traditional requirement for IP Multicast, two of which are on display this week at Cisco Live!

The first solution involves head-end software replication at the source virtual switch (a Nexus 1000V). At the head end of the VXLAN tunnel, the switch creates one copy of the packet for each possible IP address at which the destination MAC address can be found, and unicasts each copy to its destination. This avoids both the requirement to manage IP Multicast within the network core and flooding at the destination end of the network. This scenario works well when there is a relatively small number of IP addresses for VXLAN termination points.
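As a rough illustration of head-end replication, the Python sketch below turns one flooded frame into a list of unicast sends. The function name and the idea of a static per-segment endpoint list are assumptions made for the example, not a description of how the Nexus 1000V stores or obtains that list.

```python
from typing import Iterable, List, Tuple


def head_end_replicate(
    udp_payload: bytes,
    local_ip: str,
    segment_endpoints: Iterable[str],
) -> List[Tuple[str, bytes]]:
    """Return one (destination IP, payload) unicast send per remote endpoint.

    segment_endpoints is the (assumed) list of termination point IPs that
    participate in this VXLAN segment, including the local one.
    """
    return [(ip, udp_payload) for ip in segment_endpoints if ip != local_ip]


# With three endpoints in the segment, one flooded frame becomes two unicasts.
sends = head_end_replicate(b"frame", "10.0.0.1", ["10.0.0.1", "10.0.0.2", "10.0.0.3"])
```

The cost is linear in the number of remote endpoints, because the source switch does the work the multicast core would otherwise do. That is why the approach suits segments with relatively few termination points.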

The second solution relies on the control plane of the Nexus 1000V virtual switch, the Virtual Supervisor Module (VSM), to distribute the MAC locations of the VMs to the Nexus 1000V Virtual Ethernet Module (VEM, or the data plane), so that all packets can be sent in unicast mode. While this solution seemingly conflicts with the VXLAN design objective of not relying on a control plane, it provides an optimal solution within Nexus 1000V-based virtual network environments. Compatibility with other VXLAN implementations is maintained through IP Multicast, where required.
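Conceptually, the control-plane approach replaces learning by flooding with a table that is pushed to every data-plane module. The Python sketch below models that idea only; the class names, methods, and table layout are hypothetical and do not reflect the actual VSM-to-VEM protocol.

```python
class Vem:
    """Toy data plane: forwards using entries installed by the control plane."""

    def __init__(self, ip: str):
        self.ip = ip
        self.forwarding = {}  # (segment ID, MAC) -> remote endpoint IP

    def install(self, entries: dict) -> None:
        self.forwarding.update(entries)

    def lookup(self, segment_id: int, mac: str):
        """Return the unicast destination IP, or None if the MAC is unknown."""
        return self.forwarding.get((segment_id, mac))


class Vsm:
    """Toy control plane: tracks where each VM MAC lives and tells every VEM."""

    def __init__(self):
        self.mac_table = {}
        self.vems = []

    def register_vem(self, vem: Vem) -> None:
        self.vems.append(vem)
        vem.install(dict(self.mac_table))  # bring a new VEM up to date

    def report_vm(self, segment_id: int, mac: str, vem_ip: str) -> None:
        """Record a VM's location and distribute it to all registered VEMs."""
        self.mac_table[(segment_id, mac)] = vem_ip
        for vem in self.vems:
            vem.install({(segment_id, mac): vem_ip})
```

Because every VEM already knows the destination IP for every VM MAC address in the segment, no flooding step is needed before the first unicast packet is sent.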

These two solutions should be available next quarter as we roll out updates to our Nexus 1000V virtual switch. In addition to these approaches to avoiding multicast in VXLAN, Cisco has in mind other solutions for later in the year that offer advantages in other VXLAN scenarios.

Since VXLAN is an IETF standard, some questions naturally arise: “Do these innovations violate the standard?” “Are these proprietary extensions?” “What if I’m not working in a purely Nexus 1000V environment?” Fair enough. The point is that Cisco has a history of evolving standards while solving customer problems and maintaining compatibility with existing implementations. In this VXLAN case, we are still maintaining compatibility with other VXLAN solutions through multicast where desired, and using these extensions in Nexus 1000V is optional. In addition, Cisco has already made multiple proposals to the IETF to address the IP Multicast concerns. We hope the rest of the industry, which is generally cognizant of the difficulties with multicast in scaling out VXLAN networks, helps back these proposals. But until then, we will be helping our customers overcome one of the main VXLAN objections they have today.

And if you are in London, stop by the Cisco Data Center booth and check out the new demos and let us know what you think!
