[Note: This is the second of a four-part series on the OpFlex protocol in Cisco ACI, how it enables an application-centric policy model, and why other SDN protocols do not. Part 1 | Part 3 | Part 4]

Following on from the first part of our series, this blog post takes a closer look at some of the architectural components of Cisco ACI and the VMware NSX software overlay solution to quantify the advantages of Cisco’s application-centric policies and to demonstrate how the ACI architecture supports greater scale and more robust IT automation.

As called for in the requirements listed in the previous section, Cisco ACI is an open architecture that includes the policy controller and policy repository (Cisco APIC), infrastructure nodes (network devices, virtual switches, network services, etc.) under Cisco APIC control, and a protocol for communication between Cisco APIC and the infrastructure. For Cisco ACI, that protocol is OpFlex.

OpFlex was designed with the Cisco ACI policy model and cloud automation objectives in mind, including important features that other SDN protocols could not deliver. OpFlex supports the Cisco ACI approach of separating the application policy from the network and infrastructure without also separating out the control plane. This approach provides the desired centralization of policy management, allowing automation of the entire infrastructure without limiting scalability through a centralized control point or creating a single point of catastrophic failure. With Cisco ACI and OpFlex, the control engines are distributed, essentially staying with the infrastructure nodes that enforce the policies.

This design makes sense when you consider that the ways devices are configured and policies are enforced in network nodes vary considerably across vendors and models. If the controller had to handle these details, they would either introduce complexity into the Cisco ACI policy model or result in policy that does not take advantage of each device’s full capabilities. The likely result would be a proprietary system with controlled devices modeled on a small number of device types or a single vendor’s products.

OpFlex, in contrast, uses high-level application-oriented policy statements sent to network nodes, and it relies on those devices to determine how to implement the policies. After device configurations are completed, such as configuration of an application delivery controller (ADC) to support a particular service or configuration of a firewall with the proper policies between two tiers (virtual machines) of an application, communication between Cisco APIC and the infrastructure may be required only in the event of policy updates or fault scenarios.
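To make the declarative idea concrete, here is a minimal Python sketch. It is not the OpFlex wire format or any Cisco API: the `Policy` class, the `Firewall` and `LoadBalancer` renderers, and the rule strings are hypothetical names invented for illustration. It shows only the division of labor, in which the controller states intent once and each device type translates that intent into its own local configuration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """High-level intent: which tier may talk to which, and on what port."""
    source_tier: str
    dest_tier: str
    port: int


class Firewall:
    """Hypothetical device that renders a policy as an allow rule."""

    def render(self, policy: Policy) -> str:
        return f"permit tcp {policy.source_tier} -> {policy.dest_tier} eq {policy.port}"


class LoadBalancer:
    """Hypothetical device that renders the same policy as a virtual service."""

    def render(self, policy: Policy) -> str:
        return f"virtual-service {policy.dest_tier}:{policy.port} pool={policy.dest_tier}"


def push_policy(policy, devices):
    """The controller sends one policy; each device decides how to implement it."""
    return {type(d).__name__: d.render(policy) for d in devices}


web_to_app = Policy(source_tier="web", dest_tier="app", port=8080)
rendered = push_policy(web_to_app, [Firewall(), LoadBalancer()])
for device, config in rendered.items():
    print(f"{device}: {config}")
```

The controller-side function never inspects device internals. Supporting a new device class in this sketch means adding another renderer, not changing the policy.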

OpFlex is also a bidirectional communication protocol. In addition to commands sent from the controller to the managed devices, useful state, performance, and health metrics can be sent from network nodes to either Cisco APIC or an external analytics engine (also called an observer in the OpFlex specification). Within Cisco ACI, this capability is integral to the 360-degree network visibility across physical and virtual infrastructure and to the reporting of health scores on an application-by-application basis.
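The upstream half of that exchange can be pictured with a small Python sketch. Everything in it is an assumption made for illustration: the `HealthReport` message, the `Observer` class, the 0 to 100 scale, and the plain average used to summarize an application are hypothetical and do not describe how Cisco APIC actually computes health scores.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class HealthReport:
    """Hypothetical upstream message from a node to the controller or observer."""
    node: str
    application: str
    score: int  # 0 (failed) to 100 (healthy); scale assumed for illustration


class Observer:
    """Collects reports sent by nodes and summarizes them per application."""

    def __init__(self):
        self.reports = []

    def receive(self, report: HealthReport) -> None:
        self.reports.append(report)

    def application_health(self, application: str) -> float:
        scores = [r.score for r in self.reports if r.application == application]
        if not scores:
            raise ValueError(f"no reports for {application}")
        # A plain average is an assumption; the real scoring method is not described here.
        return mean(scores)


observer = Observer()
observer.receive(HealthReport("leaf-1", "web-store", 90))
observer.receive(HealthReport("vswitch-7", "web-store", 70))
observer.receive(HealthReport("leaf-2", "billing", 100))
print(observer.application_health("web-store"))
```

The point of the sketch is that physical and virtual nodes report into the same collector, so the summary can be keyed by application instead of by device.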

For a more complete understanding of the OpFlex protocol, please refer to this whitepaper.

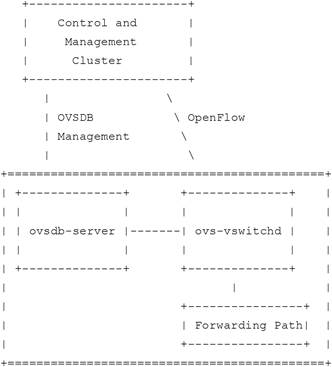

Let’s compare the Cisco ACI model to the VMware NSX architecture, which uses OpenFlow as the communication protocol to Open vSwitch (OVS) instances, along with the OVSDB management protocol. OVS is critical for forwarding traffic between virtual machines in the VMware NSX environment, and it is open to programmatic extension through OVSDB. OVS instances can then be managed from an SDN controller that supports both the OpenFlow and OVSDB protocols.

According to the OVSDB management protocol specification, the protocol is used to perform management and configuration operations on the OVS instance, and such operations occur “on a relatively long timescale” (taken to mean relatively infrequently). OVSDB does not perform management for individual data flows, leaving those instead to OpenFlow.
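As an illustration of the kind of configuration operation OVSDB carries, the sketch below builds a JSON-RPC `transact` request in Python. The OVSDB management protocol (RFC 7047) is based on JSON-RPC and defines `transact` as taking a database name followed by operations. The bridge name `br-demo` and the request id are made-up values, and the message is only constructed and printed, not sent to a real switch. A complete transaction that adds a bridge would normally also update the root `Open_vSwitch` table to reference the new row, which is omitted here for brevity.

```python
import json


def ovsdb_insert_bridge(bridge_name: str, request_id: int) -> dict:
    """Build a JSON-RPC 'transact' request that inserts one row into the Bridge table."""
    insert_op = {
        "op": "insert",
        "table": "Bridge",
        "row": {"name": bridge_name},
    }
    return {
        "method": "transact",
        # First parameter is the database name, followed by the operations.
        "params": ["Open_vSwitch", insert_op],
        "id": request_id,
    }


request = ovsdb_insert_bridge("br-demo", request_id=1)
print(json.dumps(request, indent=2))
```

Nothing in this message describes an individual traffic flow. Per-flow forwarding entries are programmed separately through OpenFlow.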

Figure: OVSDB Management Protocol and OpenFlow Combine to Support Virtual Switch Control

This design brings up a number of obvious comparisons to OpFlex and the Cisco ACI policy model. The OVSDB management protocol and OpFlex are both used to communicate from a centralized policy controller to individual network nodes, and both are primarily used to configure devices, create connections, etc., and not to manage individual applications or traffic flows.

But whereas OpFlex and Cisco ACI rely on individual devices to carry out the policies based on the capabilities of the device, whether it is a switch, ADC, or firewall, VMware NSX and OVSDB require continuous management commands from the controller to the device through OpenFlow. As noted earlier, this requirement can seriously hinder scalability, and the whole network can fail if the controller and management cluster fail. The controller design also becomes more complicated because the controller must manage many low-level device- and flow-specific activities. And because OVSDB is based on the OVS architecture, every device must have essentially the same characteristics or internal interfaces as a virtual switch, eliminating the capability of VMware NSX to apply policies to other classes of devices.

In a blog post from June 2014, Ivan Pepelnjak points out some of the limitations of OpenFlow for managing overlay virtual networks in this manner (“Is OpenFlow the Best Tool for Overlay Virtual Networks?”).

It’s clear that OpFlex and the Cisco ACI policy model provide more flexibility, greater resiliency, and the possibility of much more robust cloud and application automation policy across a wider range of network infrastructure than the overlay network approach used by VMware NSX.

In the next part of this four-part series, we will take a closer look at the ACI application policy that is built on top of OpFlex and at some of the benefits of this application-centric approach.
