My favorite part about making a living in the tech industry is that there is always something new to learn. When Amazon Web Services launched in 2006, it slowly began to change the way people thought about compute infrastructure and software architectures. But what is the next technology on the horizon that is positioned to do the same?

Whether you call it Function-as-a-Service (FaaS) or Serverless (I’ll explain the difference between those two terms in a moment), this new technology has a chance at forming the basis of the next round of innovation. But what is it, and what makes it different? Who are the players involved? For answers to those questions, you’ll have to keep reading.

FaaS vs Serverless: What It Is and Why It’s Different

This technology is new enough that you won’t even get a standard answer for what to call it, but when I attended Serverless Conf back in April, most people agreed that “Serverless” refers to the application architecture, because it frees a software developer from thinking about the operations typically associated with servers. In a way, though, it’s a terrible term: there are indeed physical servers in the stack that somebody (typically a public cloud provider) has to attend to. It’s just not the developer who has to think about them anymore.

Contrast that with “Function-as-a-Service”, or FaaS, which refers to the runtime on top of which a serverless architecture is built.

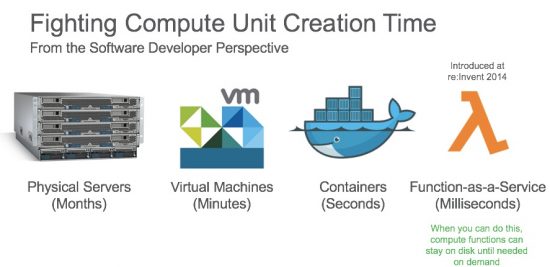

The best way to understand the difference is with a history lesson. For 30 years, we as an industry have been pushing to shrink the amount of time it takes to provision a unit of compute.

Back in the early 1990s, when my career started, we only had physical servers available to us, and we had to treat them as a scarce resource because getting a new one took months. In the late 1990s and early 2000s, virtualization changed that thinking. Although it was originally invented to get better utilization out of existing physical hardware, it also cut the time needed to create a new virtual machine (VM) to minutes. That led to horizontal autoscaling and blue/green deployments, which hadn’t been feasible before. The underlying innovation there was the hypervisor, which made virtualization possible.

Currently, containers are all the rage. Using a different resource isolation technique than hypervisors (sharing the host’s operating system kernel instead of running a full guest operating system per instance), container engines like Docker can spin up units of compute in seconds. This has fueled the microservices revolution, which has tremendously shortened the turnaround time for new functionality.

FaaS is essentially an evolution of containers. Imagine having a dozen containers already spun up, each with a common language runtime such as Python, Java, or Node.js already installed, but without any specific code to execute within those runtimes. When an event occurs, such as a file being written to a file system or an API call, the FaaS engine loads the relevant code into a pre-warmed language container, executes it, and shuts the container down. In some scenarios the container stays active with the code loaded so that it can respond more quickly to the next instance of a particular event, but those are the basics of how a FaaS runtime operates.
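To make that lifecycle concrete, here is a toy, in-process simulation in Python. It is a sketch under simplifying assumptions, not how any real FaaS engine is built: `ToyFaaSEngine`, `WarmContainer`, and `FUNCTION_STORE` are hypothetical names, a “container” is just a Python object, and “loading code from disk” is modeled by compiling a source string with `exec`. The `keep_warm` flag models the case where a container keeps its code loaded for the next event.

```python
from dataclasses import dataclass, field

# Hypothetical "disk": function source code stored by name, not yet loaded into memory.
FUNCTION_STORE = {
    "on_file_written": (
        "def handler(event):\n"
        "    return 'processed ' + event['path']\n"
    ),
}


@dataclass
class WarmContainer:
    """A pre-started language runtime with no user code loaded yet (hypothetical)."""
    runtime: str
    loaded: dict = field(default_factory=dict)  # function name -> handler callable


class ToyFaaSEngine:
    """Toy model of a FaaS engine: a pool of warm runtimes plus load-on-event."""

    def __init__(self, runtime="python", pool_size=3, keep_warm=False):
        self.runtime = runtime
        self.keep_warm = keep_warm
        # Containers spun up ahead of time with the runtime but no function code.
        self.idle = [WarmContainer(runtime) for _ in range(pool_size)]
        # Containers that stayed active with a specific function already loaded.
        self.warm_by_function = {}
        self.cold_loads = 0  # how many times code had to be loaded from "disk"

    def _load(self, container, name):
        # Simulate loading a function's code into the container's runtime.
        namespace = {}
        exec(FUNCTION_STORE[name], namespace)
        container.loaded[name] = namespace["handler"]
        self.cold_loads += 1

    def invoke(self, name, event):
        # Reuse a container that still has this function loaded, if any.
        container = self.warm_by_function.pop(name, None)
        if container is None:
            # Otherwise take a pre-warmed runtime (or start one if the pool is empty).
            container = self.idle.pop() if self.idle else WarmContainer(self.runtime)
            self._load(container, name)
        result = container.loaded[name](event)
        if self.keep_warm:
            # Keep the container active for the next instance of this event.
            self.warm_by_function[name] = container
        else:
            # "Shut down" this container (drop it) and replenish the pool with a fresh runtime.
            self.idle.append(WarmContainer(self.runtime))
        return result


engine = ToyFaaSEngine(keep_warm=True)
print(engine.invoke("on_file_written", {"path": "/uploads/a.txt"}))  # processed /uploads/a.txt
print(engine.invoke("on_file_written", {"path": "/uploads/b.txt"}))  # processed /uploads/b.txt
print(engine.cold_loads)  # 1: the second event reused the warm container
```

With `keep_warm=True`, the second invocation reuses the already-loaded code, so `cold_loads` stays at 1; with `keep_warm=False`, every event pays the loading cost again.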

This scheme is very much like just-in-time manufacturing, but for container language environments. When no event is firing a particular piece of code (called a function), that code sits on disk and doesn’t clutter memory. The application architecture, then, becomes a set of functions that respond to a series of events, which might chain upon one another. For example, Function A responds to an API call and writes a field to a database; Function B responds to that field being written and takes some other action.
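The Function A / Function B chain can be sketched in a few lines of Python. This is an illustrative assumption, not a real platform API: `EventBus`, `Database`, and the `api.call` and `db.write` event names are hypothetical, and everything runs in one process. What it shows is that neither function calls the other; each one only reacts to an event.

```python
from collections import defaultdict


class EventBus:
    """Routes named events to the functions subscribed to them (hypothetical)."""

    def __init__(self):
        self.subscribers = defaultdict(list)

    def subscribe(self, event_type, fn):
        self.subscribers[event_type].append(fn)

    def publish(self, event_type, payload):
        for fn in self.subscribers[event_type]:
            fn(payload)


class Database:
    """A dict-backed table that emits a 'db.write' event on every write."""

    def __init__(self, bus):
        self.rows = {}
        self.bus = bus

    def write(self, key, field, value):
        self.rows.setdefault(key, {})[field] = value
        self.bus.publish("db.write", {"key": key, "field": field, "value": value})


bus = EventBus()
db = Database(bus)
notifications = []


def function_a(request):
    """Function A: responds to an API call by writing a field to the database."""
    db.write(request["user_id"], "status", "registered")


def function_b(change):
    """Function B: responds to the database write by taking some other action."""
    if change["field"] == "status":
        notifications.append(f"welcome email queued for {change['key']}")


bus.subscribe("api.call", function_a)
bus.subscribe("db.write", function_b)

bus.publish("api.call", {"user_id": "u42"})
print(notifications)  # ['welcome email queued for u42']
```

On a real platform, the event routing and the database change feed would be managed services (for example, DynamoDB Streams can trigger AWS Lambda functions); here they are plain objects so the wiring is visible.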

So FaaS is akin to the hypervisor or the Docker engine in earlier technology waves, and serverless application architectures build on FaaS the same way that VM-based and container-based architectures built on those underlying technologies.
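From the developer’s side, the unit handed to a FaaS runtime is usually just a function with a fixed signature. As a sketch, assuming AWS Lambda’s Python runtime behind API Gateway’s proxy integration (other providers use different signatures and event shapes), a function might look like the following. The handler name is set in the function’s configuration; `lambda_handler` is simply a conventional choice, and the `name` query parameter is a hypothetical example.

```python
import json


def lambda_handler(event, context):
    """Entry point the FaaS runtime calls once per event.

    Assumes an API Gateway proxy-style event; `context` carries runtime
    metadata and is unused here.
    """
    # queryStringParameters is None when the request has no query string.
    params = event.get("queryStringParameters") or {}
    name = params.get("name", "world")
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"message": f"hello, {name}"}),
    }


if __name__ == "__main__":
    # Local smoke test with a hand-built event; no server and no FaaS runtime involved.
    fake_event = {"queryStringParameters": {"name": "serverless"}}
    response = lambda_handler(fake_event, None)
    print(response["statusCode"], response["body"])
```

Note what is missing: there is no web server, port, or process lifecycle in this code. Provisioning, scaling, and routing events to the function are the FaaS runtime’s job.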

In a future article, I’ll cover some common application design patterns that take advantage of this model, but it’s already clear that the ability to load a function in a few milliseconds has a major impact on the way applications get assembled.

Players and Timelines

This is a young market but it has some familiar players:

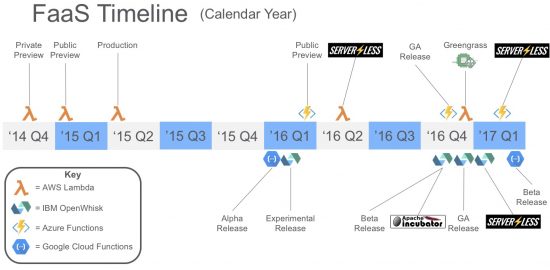

AWS pioneered this concept with its Lambda offering, announced in November 2014, and had the market to itself for quite a while. Then Azure, IBM, and Google all announced competitors in the first quarter of 2016. Shortly after that, the first tooling vendor in this space emerged: the Serverless Framework, which offers command-line tools that make it easier for developers to create, test, and deploy functions.

Last November and December saw Azure and IBM reach general availability (GA), and IBM chose to contribute its OpenWhisk offering to the Apache Software Foundation as an incubator project. Google followed with its own beta release this past March, and the team behind the Serverless Framework now offers support for all four platforms.

Next Time

Next time, I’ll cover some common application architectures that take advantage of FaaS runtimes and the loosely coupled events that bind the pieces together. This promises to be an exciting space to keep an eye on and, as we will see, it might be the next disruptive technology for all kinds of uses.
