For the past five years I have been privileged to be part of a very dedicated team of networking subject matter experts that prepares and runs the Ciscolive! event network. Before 2008 the network was installed and monitored by a commercial events networking company. However, with the number of Technical Marketing Engineers, Services Engineers and product developers on-site for Ciscolive!, it became clear that we were well equipped to take on the responsibility in addition to our speaking responsibilities.

Planning

The preparation for Ciscolive! starts many months before the event with weekly, collaborative Webex sessions to discuss design criteria, venue particulars and product/feature configurations. Generally the teams align into core Route/Switch, Data Center, Security, Wireless, and Network Management/Operations; however, we work collaboratively to design and implement the best show network possible. We also have individuals from our Cisco Remote Management Services (RMS) and Video Surveillance teams, plus partner support from CenturyLink, our Internet service provider, and NetApp, our storage vendor.

A small part of the NOC staff will travel to the venue to survey the wireless, power and Internet service capabilities several months beforehand.

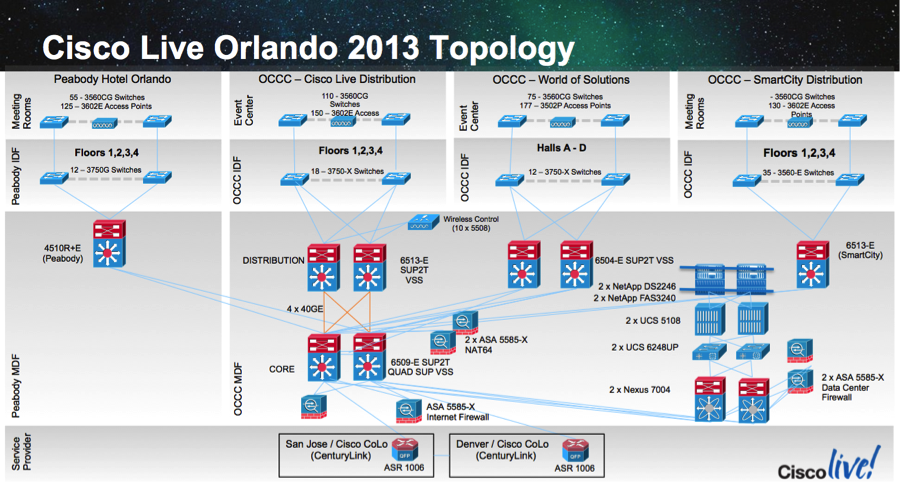

The following hardware was planned for Ciscolive! Orlando:

410 x Cisco 3602 Wireless Access Points [AIR-CAP3602I-x-K9] for OCCC and Peabody halls and conference rooms

180 x Cisco 3502P Wireless Access Points [AIR-CAP3502P-A-K9] with AIR-ANT2513NP-R stadium antennas for Keynote and World of Solutions halls

10 x Cisco 5508 Wireless LAN Controllers

200 x Catalyst 3560CG access switches and Catalyst 3750-X aggregation switches

6 x Catalyst 6504-E/6509-E/6513-E VSS distribution and core switches

6 x ASA5585-X

2 x Nexus 7000 data center switches

2 x UCS 5108 chassis, fully loaded

6 x UCS C-Series servers for Video Surveillance and other miscellaneous services

2 x NetApp controllers and disk trays (14 TB)

45 x Cisco Video Surveillance 4500 Series IP Cameras

24 x CTS video codecs for session recording

Staging

The event network is typically staged in San Jose. Much of the team engages remotely, some internationally, but the San Jose personnel are able to physically visit the equipment. We configure the distribution and access-layer devices, along with the wireless LAN controllers and APs, with as much staging configuration as is practical. However, the event network can be dynamic, so we have to be prepared to make mass changes.

A month out Joe Clarke, a peer Distinguished Services Engineer, and I started to install and configure the various network management and support service applications. With an abundance of UCS compute and NetApp storage, we tend to run many Cisco management applications, including beta releases. However, some of the primary applications we deployed were:

| Application | Purpose |
| --- | --- |
| Cisco Prime LAN Management Solution (LMS) v4.2.3 | Element Management |
| Cisco Prime Infrastructure v1.3 | Element Management |
| Cisco Prime Infrastructure v2.0 beta | Beta Testing Element Management |
| Cisco Prime Network Registrar v8.1.2 | DNS/DHCP Services (x8 servers) |
| CiscoSecure Access Control Server (ACS) v5.4 | AAA/TACACS services (x2 servers) |
| Cisco Prime Network Analysis Module (NAM) Appliance | Packet Capture, NetFlow |
| Cisco Unified Communications Manager | Unified Comms services |
| Cisco Prime Collaboration | Unified Comms monitoring/provisioning |
| Cisco Service Portal v9.4.1 R2 | Service Request Management (ordering) |
| Cisco Process Orchestrator v2.3.5 | Automation/Orchestration |
| FreeBSD | Syslog/NetFlow multiplexing and jumphost |

Other NOC SMEs in Security, Video Surveillance and Unified Collaboration also install their own monitoring and management applications in our UCS/NetApp environment.

The core network is staged and stays up until shipment. The wireless access points and access switches get staged, but are then shut down so we don’t have hundreds of devices crowding a lab. Typically 10 days before the start of Ciscolive! the equipment is shut down and loaded onto trucks for transport to the venue.

Onsite

Although the show was to run June 23 – 27, we needed to be “show-ready” by 3pm on Saturday (6/22) when registration opened. I arrived Tuesday (6/18) morning with the plan to start working in earnest on Wednesday. However, our 6-rack core network was already staged in a conference room, so I thought I might as well jump in!

The conference room would later be reconfigured as a training breakout room. With ‘behind-the-scenes’ access I’m often impressed to see how the venues can bring in large, mobile power panels to serve the requirements of our equipment. We’re not talking simple 3-pin NEMA 5–15 outlets here!

Many of the wireless APs, antennas and pole mounts were still in boxes and needed to be broken down. We had nearly 2 million square feet of floor space in the Orange County Convention Center. This venue was 30% larger than the previous year’s San Diego Convention Center. There would be a LOT of walking to deploy and fix misconfigured equipment. We relied heavily on the Cisco Academy ‘Dream Team’ of around 40 college students to help us with configuration, device placement and troubleshooting. It was very rewarding to spend time mentoring students who are just getting started in their careers. Thanks, Cisco Networking Academy team!

For the next several days we worked long hours to ensure the network was configured to the topology seen below.

The main network was in the Orange County Convention Center (OCCC), but we also had a network extension to the Peabody Hotel next door for executive meetings. This was the first year we extended the wireless network into the World of Solutions and keynote halls.

Some breakout rooms had to be visited to troubleshoot why some CTS systems were not registering. Oftentimes the problems were related to port configuration changes, physical patching or incorrect trunk configuration. We also identified and remediated a potential spanning-tree loop before it became service-impacting to the show.

As mentioned earlier, it’s interesting to get ‘behind-the-scenes’ of the show. The main keynote area has some incredible audio/visual technology behind the main stage to serve the venue audio, ultra-wide screens, image magnification and demos.

Sometimes you have to go to great lengths to ensure network connectivity. We had 8 wireless access points being served PoE and network connectivity by two Catalyst 3560CGs in the 2,600-seat Chapin Theatre. The APs and switches were on a catwalk that gives access to the stage lighting, 60 feet up. Anyone afraid of heights AND the dark need not apply.

Ken and I hit the catwalks to fix some switch connectivity.

Extreme console connectivity

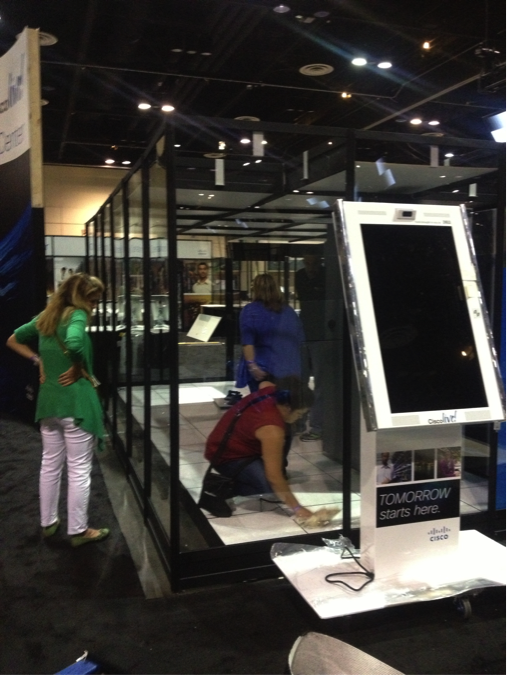

The 6 racks of equipment that were temporarily staged in a breakout room were our core network and compute/storage for the show network and services. Thursday evening we shut it all down and moved it into the World of Solutions in Hall B. Onsite attendees may recall this equipment was located in a miniature, enclosed data center in the rear of the World of Solutions at the Network Operations Center. OCCC Hall B did not get air-conditioning until late Sunday in preparation for the show. During this time the loading bay doors were open to allow forklifts and other machinery to bring equipment in and out.

The racks were put into the ‘plexiglass data-center’ with air handlers because it was more economical to create a mini cooling eco-system, rather than turn on A/C for the whole World of Solutions with the loading bays open.

The last couple of days before the show started were spent updating port configurations and ensuring our data collection was started. In events such as Ciscolive! the room configurations can be dynamic and require us to make switchport changes to accommodate different needs. We may need to add access points or digital signage, or accommodate a router for VPN purposes. This year we used our Cisco Service Portal (CSP) and Cisco Process Orchestrator (CPO) tools from our Intelligent Automation portfolio. Rather than train up the Cisco Academy volunteers on Cisco Prime LMS/Infrastructure, we streamlined port configuration changes by developing a simplified, customized portal in CSP that linked with Cisco Process Orchestrator to automate the changes using Cisco Prime LMS.

The result was a system that extracted a list of the switches we were managing and presented them in a drop-down selector. The NOC staffer would then see a dynamically updated list of switch ports, based on the port count of a Catalyst 3560CG or 3750-X. They could select one or more ports, then a port profile, such as ‘Wired Access, Wireless AP, Digital Signage, IP Phone, IP Camera, or Trunk’. A list of VLANs was presented, and a data-retrieval rule showed what each VLAN was used for: its name, service, location and the IP subnet it served. Once the ‘order’ was submitted, CSP would transfer the selections to CPO. CPO would build the ‘job’ that could be consumed by Cisco Prime LMS, then monitor the status of the job, and finally send an email to the NOC staff.

We deployed a couple hundred port changes throughout the event.
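To make the order-to-job flow described above a little more concrete, here is a minimal Python sketch of the idea. It is not the actual CSP/CPO/LMS implementation: the port counts, the interface naming, the command templates and every name in the code (`PortOrder`, `build_job`, and so on) are assumptions made up for illustration.

```python
from dataclasses import dataclass

# Hypothetical port counts per switch model (assumed values, not the show inventory).
PORT_COUNTS = {"3560CG": 8, "3750-X": 48}

# Illustrative IOS-style command templates; the real profiles are not in this post.
ACCESS_TEMPLATE = [
    "switchport mode access",
    "switchport access vlan {vlan}",
    "spanning-tree portfast",
]
TRUNK_TEMPLATE = [
    "switchport mode trunk",
    "switchport trunk allowed vlan {vlan}",
]

# Profile names come from the portal description; the mapping is an assumption.
PROFILES = {
    "Wired Access": ACCESS_TEMPLATE,
    "Wireless AP": ACCESS_TEMPLATE,
    "Digital Signage": ACCESS_TEMPLATE,
    "IP Phone": ACCESS_TEMPLATE,
    "IP Camera": ACCESS_TEMPLATE,
    "Trunk": TRUNK_TEMPLATE,
}


@dataclass(frozen=True)
class PortOrder:
    """One submitted 'order': a switch, some ports, a profile and a VLAN."""
    switch: str
    model: str
    ports: tuple
    profile: str
    vlan: int


def available_ports(model):
    """Return the selectable port numbers for a switch model."""
    return list(range(1, PORT_COUNTS[model] + 1))


def build_job(order):
    """Turn an order into a flat list of configuration lines (the 'job')."""
    valid = set(available_ports(order.model))
    bad = [p for p in order.ports if p not in valid]
    if bad:
        raise ValueError(f"ports {bad} do not exist on a {order.model}")
    if order.profile not in PROFILES:
        raise ValueError(f"unknown port profile: {order.profile}")

    lines = []
    for port in order.ports:
        # Interface naming is simplified; real naming differs by platform.
        lines.append(f"interface GigabitEthernet0/{port}")
        lines.extend(" " + cmd.format(vlan=order.vlan) for cmd in PROFILES[order.profile])
    return lines


if __name__ == "__main__":
    order = PortOrder("sw-room-101", "3560CG", (1, 2), "Wired Access", 110)
    print("\n".join(build_job(order)))
```

Validating the port numbers against the model before building the job mirrors the portal only showing ports that exist on the selected switch.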

Circle back in a couple weeks when I’ll have another blog about what happened during and after Ciscolive!.

The event was amazing, and so are the Cisco people AND the network. Thanks for sharing the experience.

Thank you for the compliment, Pablo! We’re honored to be a part of your experience.

I wonder why ISE wasn’t used…

Hello Anonymous,

ISE would have been used if we were providing differentiated services to users by their ‘classification’, e.g. Event Staff, Attendee, Speaker, World of Solutions Vendor, etc.

However, we did not break down traffic and access (policy) by user. That would have required device registration and assignment into policy groups (and AD groups). We were running the network pretty open as far as ‘registration’ went. You’ll note there was no captive portal/BBSM-like service in place. This was intentional.

We did secure the network by VLAN/subnet. So if you were on the wireless ‘ciscolive2013’ network (and the associated VLAN), you were restricted from the show/NOC service network by the ASA. Other networks were only allowed to the Internet and nowhere else.

CiscoSecure ACS was used to provide the AAA/TACACS+ logins to the routers/switches and centralized login authentication to the various management applications that could source AAA/TACACS+.
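As a rough illustration of the VLAN/subnet restriction described in this reply, here is a small Python sketch of the rule "attendee traffic may reach the Internet but not the show/NOC service network". The subnets and names are invented for the example and are not the addresses used at the show; the real policy lived in the ASA configuration, not in code like this.

```python
import ipaddress

# Hypothetical address plan (assumed for illustration only).
ATTENDEE_NET = ipaddress.ip_network("10.10.0.0/16")      # e.g. the attendee wireless VLAN
NOC_SERVICE_NET = ipaddress.ip_network("10.100.0.0/24")  # show/NOC services
INTERNAL_NETS = [ipaddress.ip_network("10.0.0.0/8")]     # everything that is not the Internet


def attendee_traffic_permitted(src, dst):
    """Model the rule for attendee-sourced traffic: Internet only."""
    src_ip = ipaddress.ip_address(src)
    dst_ip = ipaddress.ip_address(dst)
    if src_ip not in ATTENDEE_NET:
        raise ValueError("this sketch only models attendee-sourced traffic")
    # Anything inside the internal ranges (including the NOC services) is denied.
    return not any(dst_ip in net for net in INTERNAL_NETS)


if __name__ == "__main__":
    print(attendee_traffic_permitted("10.10.1.5", "10.100.0.10"))  # NOC service address
    print(attendee_traffic_permitted("10.10.1.5", "8.8.8.8"))      # Internet address
```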

It all looks amazing. When are you likely to come and make the same presentation in Africa, in places like Zimbabwe? Any plans in that direction?

Hi Stephen,

Here is the list of upcoming Ciscolive! events.

http://www.ciscolive.com/global/

I don’t see Zimbabwe in the list, but Milan, Italy and Melbourne, Australia are upcoming.

Is there a better picture of the topology? The one in the post is quite tiny 🙂

Apologies for that, Erik! There was a finite amount of screen real-estate to work with. 🙂

I updated the blog post. If you look at the image now, right below is a hyperlink to a PDF of the network topology. Hope that helps!

Thanks for the article.

I believe your tool list table should note that your LMS was 4.2.3 instead of 1.3.

Indeed! Thanks for the catch, Marvin. I guess ‘cut and paste’ tripped me up again. 🙂

Fixed in the blog.